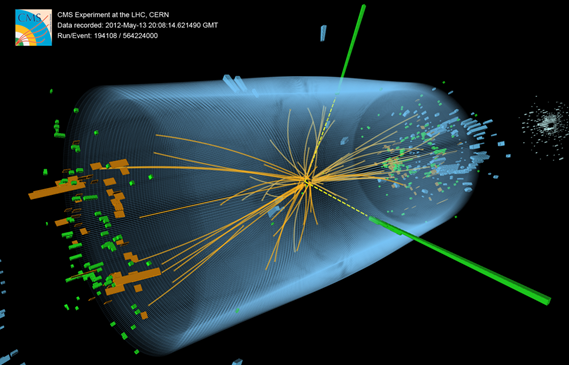

Collisions in the Large Hadron Collider (LHC) produce particles that decay in complex ways into other particles. The LHC’s detectors record the path of each particle as a series of electronic signals and send the data to the CERN Data Centre for digital reconstruction. The reconstructed result is saved as a collision event.

Up to a billion particle collisions can occur every second inside the LHC detectors. There are so many events that a pre-filtering system is needed to select the potentially most interesting ones. Even so, the LHC experiments produce around 90 petabytes (90 million gigabytes) of data per year. By comparison, all the printed material in the world is estimated to amount to approximately 200 petabytes. In a single year, then, LHC research teams must analyze a volume of data equivalent to nearly half of all the printed material in the world.

3D visualization of a proton collision recorded by the CMS detector at the Large Hadron Collider in 2012. Credits: Thomas McCauley, Lucas Taylor, and CERN. Source: CERN.

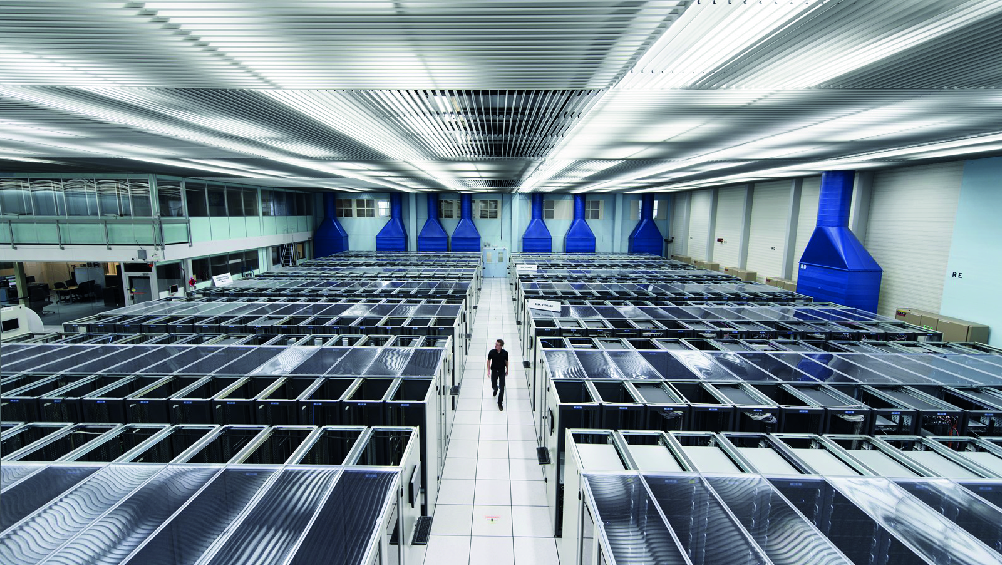

Such a large amount of data requires state-of-the-art data analysis tools. One of the tasks of the Saphir Millennium Institute's research team is to develop data analysis systems that facilitate the processing of the gigantic volume of information generated by the LHC experiments.